Total least squares

Total least squares, also known as errors in variables, rigorous least squares, or (in a special case) orthogonal regression, is a least squares data modeling technique in which observational errors on both dependent and independent variables are taken into account. It is a generalization of Deming regression, and can be applied to both linear and non-linear models.


Linear model

Background

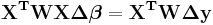

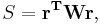

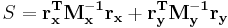

In the least squares method of data modeling, the objective function, S,

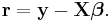

is minimized, where r is the vector of residuals and W is a weighting matrix. In linear least squares the model contains equations which are linear in the parameters appearing in the parameter vector  , so the residuals are given by

, so the residuals are given by

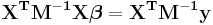

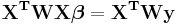

There are m observations in y and n parameters in β with m>n. X is a m×n matrix whose elements are either constants or functions of the independent variables, x. The weight matrix, W, is, ideally, the inverse of the variance-covariance matrix,  , of the observations, y. The independent variables are assumed to be error-free. The parameter estimates are found by setting the gradient equations to zero, which results in the normal equations [note 1]

, of the observations, y. The independent variables are assumed to be error-free. The parameter estimates are found by setting the gradient equations to zero, which results in the normal equations [note 1]
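As a brief sketch of the step from the objective function to the normal equations (assuming $W$ is symmetric, which holds when it is the inverse of a variance-covariance matrix), substitute the residuals into $S$ and differentiate with respect to $\beta$:

```latex
\begin{aligned}
S &= (y - X\beta)^T W (y - X\beta) \\
\frac{\partial S}{\partial \beta} &= -2\, X^T W (y - X\beta) = 0 \\
\Rightarrow\quad X^T W X \beta &= X^T W y
\end{aligned}
```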

Allowing observation errors in all variables

Now, suppose that both x and y are observed subject to error, with variance-covariance matrices $M_x$ and $M_y$ respectively. In this case the objective function can be written as

$$S = r_x^T M_x^{-1} r_x + r_y^T M_y^{-1} r_y$$

where $r_x$ and $r_y$ are the residuals in x and y respectively. Clearly these residuals cannot be independent of each other; they must be constrained by some kind of relationship. Writing the model function as $f(r_x, r_y, \beta)$, the constraints are expressed by m condition equations.[1]

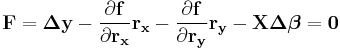

Thus, the problem is to minimize the objective function subject to the m constraints. It is solved by the use of Lagrange multipliers. After some algebraic manipulations,[2] the result is obtained.

, or alternatively

, or alternatively

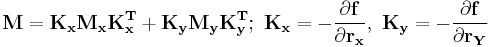

Where M is the variance-covariance matrix relative to both independent and dependent variables.

Example

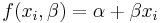

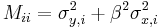

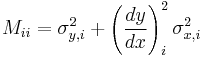

In practice these equations are easy to use. When the data errors are uncorrelated, all matrices M and W are diagonal. Then, take the example of straight line fitting.

It is easy to show that, in this case

showing how the variance at the ith point is determined by the variances of both independent and dependent variables and by the model being used to fit the data. The expression may be generalized by noting that the parameter  is the slope of the line.

is the slope of the line.

An expression of this type is used in fitting pH titration data where a small error on x translates to a large error on y when the slope is large.
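The straight-line case can be sketched in a few lines of Python. This is only an illustration under stated assumptions: errors are uncorrelated, the per-point standard deviations `sigma_x` and `sigma_y` are known, and the weights 1/M_ii (with M_ii = σ_y,i² + b²σ_x,i²) are simply recomputed from the current slope on each pass before re-solving the weighted normal equations. The function name and the fixed iteration count are hypothetical choices, not part of the original text.

```python
def fit_line_effective_variance(x, y, sigma_x, sigma_y, iterations=50):
    """Fit y = a + b*x, weighting each point by 1 / M_ii.

    M_ii = sigma_y[i]**2 + b**2 * sigma_x[i]**2 is recomputed from the
    current slope b on every pass (all errors assumed uncorrelated).
    """
    a, b = 0.0, 0.0  # b = 0 makes the first pass an ordinary weighted fit
    for _ in range(iterations):
        w = [1.0 / (sy ** 2 + (b * sx) ** 2)
             for sx, sy in zip(sigma_x, sigma_y)]
        sw = sum(w)
        x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sw
        y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sw
        sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
        sxy = sum(wi * (xi - x_bar) * (yi - y_bar)
                  for wi, xi, yi in zip(w, x, y))
        # Closed-form solution of the 2x2 weighted normal equations.
        b = sxy / sxx
        a = y_bar - b * x_bar
    return a, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # points lying exactly on y = 1 + 2x
a, b = fit_line_effective_variance(xs, ys, [0.1] * 5, [0.2] * 5)
print(a, b)
```

Because the weights depend on the slope, the fit has to be iterated; a production implementation would test for convergence instead of running a fixed number of passes.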

Algebraic point of view

The TLS problem does not have a solution in general, as was already shown by Golub and Van Loan.[3] The following considers the simple case where a unique solution exists, without making any further assumptions.

The computation of the TLS solution using the singular value decomposition is described in standard texts.[4] We can solve the equation

$$AX \approx B$$

for X, where A is m-by-n and B is m-by-k.

That is, we seek the X that minimizes the error matrices E and F for A and B respectively:

$$\mathrm{argmin}_{E,F} \| [E\; F] \|_F, \qquad (A+E) X = B+F$$

where $[E\; F]$ is the augmented matrix with E and F side by side and $\|\cdot\|_F$ is the Frobenius norm, the square root of the sum of the squares of all entries in a matrix and so equivalently the square root of the sum of squares of the lengths of the rows or columns of the matrix.

This can be rewritten as

$$[(A+E) \; (B+F)] \begin{bmatrix} X\\ -I_k\end{bmatrix} = 0,$$

where $I_k$ is the $k \times k$ identity matrix. The goal is then to find $[E\; F]$ that reduces the rank of $[A\; B]$ by k. Define $U \Sigma V^*$ to be the singular value decomposition of the augmented matrix $[A\; B]$:

$$[A\; B] = [U_A\; U_B] \begin{bmatrix}\Sigma_A &0 \\ 0 & \Sigma_B\end{bmatrix}\begin{bmatrix}V_{AA} & V_{AB} \\ V_{BA} & V_{BB}\end{bmatrix}^* = [U_A\; U_B] \begin{bmatrix}\Sigma_A &0 \\ 0 & \Sigma_B\end{bmatrix} \begin{bmatrix} V_{AA}^* & V_{BA}^* \\ V_{AB}^* & V_{BB}^*\end{bmatrix}$$

where V is partitioned into blocks corresponding to the shape of A and B.

The rank is reduced by setting some of the singular values to zero. That is, we want

$$[(A+E)\; (B+F)] = [U_A\; U_B] \begin{bmatrix}\Sigma_A &0 \\ 0 & 0_{k\times k}\end{bmatrix}\begin{bmatrix}V_{AA} & V_{AB} \\ V_{BA} & V_{BB}\end{bmatrix}^*$$

so by linearity,

$$[E\; F] = -[U_A\; U_B] \begin{bmatrix}0_{n\times n} &0 \\ 0 & \Sigma_B\end{bmatrix}\begin{bmatrix}V_{AA} & V_{AB} \\ V_{BA} & V_{BB}\end{bmatrix}^*.$$

We can then remove blocks from the U and Σ matrices, simplifying to

$$[E\; F] = -U_B\Sigma_B \begin{bmatrix}V_{AB}\\V_{BB}\end{bmatrix}^*= -[A\; B] \begin{bmatrix}V_{AB}\\V_{BB}\end{bmatrix}\begin{bmatrix}V_{AB}\\ V_{BB}\end{bmatrix}^*.$$

This provides E and F so that

$$[(A+E) \; (B+F)] \begin{bmatrix}V_{AB}\\ V_{BB}\end{bmatrix} = 0.$$

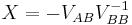

Now if  is nonsingular, which is not always the case (note that the behavior of TLS when

is nonsingular, which is not always the case (note that the behavior of TLS when  is singular is not well understood yet), we can then right multiply both sides by

is singular is not well understood yet), we can then right multiply both sides by  to bring the bottom block of the right matrix to the negative identity.

to bring the bottom block of the right matrix to the negative identity.

and so

.

.

A naive GNU Octave implementation of this is:

    function X = tls(xdata, ydata)
      % Total least squares fit of ydata ~ [1 xdata] * X using the SVD.
      m = size(ydata, 1);         % number of x,y data pairs
      A = [ones(m, 1) xdata];     % design matrix with an intercept column
      B = ydata;
      n = size(A, 2);             % n is the width of A (A is m by n)
      C = [A B];                  % C is A augmented with B
      [U, S, V] = svd(C, 0);      % economy-size SVD of C
      VAB = V(1:n, 1+n:end);      % first n rows, columns n+1 to last of V
      VBB = V(1+n:end, 1+n:end);  % bottom-right block of V
      X = -VAB / VBB;             % X = -VAB * inv(VBB)
    end
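The same SVD construction can be sketched in Python with NumPy for a general A (m-by-n) and B (m-by-k). This is a minimal illustration, not a robust solver: it assumes real data, m ≥ n + k, and a nonsingular $V_{BB}$, and unlike the Octave function it does not add an intercept column. The function name `tls` mirrors the Octave snippet; everything else is an illustrative choice.

```python
import numpy as np


def tls(A, B):
    """Total least squares solution of A X ~ B via the SVD of [A B].

    Assumes real inputs, m >= n + k, and a nonsingular V_BB block.
    """
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    if B.ndim == 1:
        B = B[:, None]            # treat a vector as a single column
    n = A.shape[1]                # number of columns of A
    # Rows of Vh are the right singular vectors, so V = Vh.T for real data.
    _, _, Vh = np.linalg.svd(np.hstack([A, B]), full_matrices=False)
    V = Vh.T
    V_AB = V[:n, n:]              # top-right block
    V_BB = V[n:, n:]              # bottom-right block
    # X = -V_AB V_BB^{-1}, computed by solving V_BB^T X^T = -V_AB^T.
    return -np.linalg.solve(V_BB.T, V_AB.T).T


A = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0], [1.0, 3.0]])
X_true = np.array([[1.0], [2.0]])
B = A @ X_true                    # consistent system: E and F are zero
print(tls(A, B))
```

Solving a linear system with `np.linalg.solve` avoids forming the inverse of $V_{BB}$ explicitly; if $V_{BB}$ is singular the call raises `LinAlgError`, which corresponds to the case the text describes as problematic.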

The approach described above, which requires the matrix $V_{BB}$ to be nonsingular, can be slightly extended by the so-called classical TLS algorithm.[6]

Computation

The standard implementation of the classical TLS algorithm is available through Netlib; see also [7][8]. All modern implementations, based for example on solving a sequence of ordinary least squares problems, approximate the matrix $X$ introduced by Van Huffel and Vandewalle. It is worth noting, however, that this $X$ is not the TLS solution in many cases.[9][10]

Non-linear model

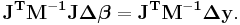

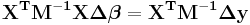

For non-linear systems similar reasoning shows that the normal equations for an iteration cycle can be written as
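One iteration cycle of the form $J^T M^{-1} J \,\Delta\beta = J^T M^{-1} \Delta y$ can be sketched for a two-parameter model. The example below is an assumption-laden illustration: the model y = a·exp(b·x), the function name, and the starting values are all hypothetical, errors are taken as uncorrelated, and M is taken to be diagonal with M_ii = σ_y,i² + (dy/dx)_i² σ_x,i², as in the straight-line example.

```python
import math


def gauss_newton_exp(x, y, sigma_x, sigma_y, a, b, iterations=20):
    """Iterate J^T M^-1 J dbeta = J^T M^-1 dy for the model y = a*exp(b*x)."""
    for _ in range(iterations):
        n11 = n12 = n22 = g1 = g2 = 0.0
        for xi, yi, sx, sy in zip(x, y, sigma_x, sigma_y):
            e = math.exp(b * xi)
            ja, jb = e, a * xi * e          # Jacobian row: df/da, df/db
            slope = a * b * e               # df/dx at this point
            w = 1.0 / (sy ** 2 + (slope * sx) ** 2)   # 1 / M_ii
            dy = yi - a * e                 # observed minus calculated
            n11 += w * ja * ja
            n12 += w * ja * jb
            n22 += w * jb * jb
            g1 += w * ja * dy
            g2 += w * jb * dy
        # Solve the 2x2 normal equations by Cramer's rule.
        det = n11 * n22 - n12 * n12
        a += (g1 * n22 - n12 * g2) / det
        b += (n11 * g2 - n12 * g1) / det
    return a, b


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * math.exp(0.5 * xi) for xi in xs]   # exact data, a = 2, b = 0.5
print(gauss_newton_exp(xs, ys, [0.05] * 5, [0.1] * 5, a=1.9, b=0.48))
```

The sketch applies undamped parameter shifts, so it relies on a starting estimate reasonably close to the solution.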

Geometrical interpretation

When the independent variable is error-free, a residual represents the "vertical" distance between the observed data point and the fitted curve (or surface). In total least squares a residual represents the distance between a data point and the fitted curve measured along some direction. In fact, if both variables are measured in the same units and the errors on both variables are the same, then the residual represents the shortest distance between the data point and the fitted curve, that is, the residual vector is perpendicular to the tangent of the curve. For this reason, this type of regression is sometimes called "two dimensional Euclidean regression" (Stein, 1983).[11]

A serious difficulty arises if the variables are not measured in the same units. First consider measuring the distance between a data point and the curve: what are the measurement units for this distance? If we measure distance using Pythagoras' theorem, we add quantities measured in different units, which leads to meaningless results. Secondly, if we rescale one of the variables, e.g. measure in grams rather than kilograms, we end up with different results (a different curve). To avoid this problem of incommensurability it is sometimes suggested that we convert to dimensionless variables; this may be called normalization or standardization. However, there are various ways of doing this, and these lead to fitted models which are not equivalent to each other. One approach is to normalize by known (or estimated) measurement precision, thereby minimizing the Mahalanobis distance from the points to the line and providing a maximum-likelihood solution.
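The effect of rescaling can be illustrated with a small Python sketch. It uses the standard closed-form slope of the orthogonal (perpendicular-distance) regression line, which is not given in the text above; the function name and the toy data are hypothetical, and the formula assumes the centred cross-product `sxy` is non-zero.

```python
import math


def orthogonal_slope(x, y):
    """Slope of the line minimizing the sum of squared perpendicular distances."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    # Closed form for equal error variances; requires sxy != 0.
    return (syy - sxx + math.sqrt((syy - sxx) ** 2 + 4 * sxy ** 2)) / (2 * sxy)


x_kg = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [1.0, 3.0, 2.0, 5.0, 4.0]
x_g = [1000.0 * v for v in x_kg]          # same data, grams instead of kg

slope_kg = orthogonal_slope(x_kg, y)      # y-units per kilogram
slope_g = orthogonal_slope(x_g, y)        # y-units per gram
# If the two fits described the same line, these would agree.
print(slope_kg, slope_g * 1000.0)
```

Converting the second slope back to per-kilogram units gives a different value from the first, so the two fits are genuinely different lines rather than the same line expressed in other units.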

Scale invariant methods

In short, total least squares does not have the property of units-invariance (it is not scale invariant). For a meaningful model we require this property to hold. A way forward is to realise that residuals (distances) measured in different units can be combined if multiplication is used instead of addition. Consider fitting a line: for each data point the product of the vertical and horizontal residuals equals twice the area of the triangle formed by the residual lines and the fitted line. We choose the line which minimizes the sum of these areas. Nobel laureate Paul Samuelson proved that it is the only line which possesses a set of certain desirable properties, including scale invariance and invariance under interchange of variables (Samuelson, 1942).[12] This line has been rediscovered in different disciplines and is variously known as the reduced major axis, the geometric mean functional relationship (Draper and Smith, 1998),[13] least products regression, diagonal regression, line of organic correlation, and the least areas line. Tofallis (2002)[14] has extended this approach to deal with multiple variables.
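A short Python sketch can make the two invariance properties concrete. It relies on the standard closed form for the reduced major axis slope, sign(s_xy)·sqrt(s_yy / s_xx), which is not stated in the text above; the function name and toy data are hypothetical.

```python
import math


def reduced_major_axis_slope(x, y):
    """Slope of the reduced major axis (geometric mean) line of y on x."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    # The slope takes the sign of the covariance.
    return math.copysign(math.sqrt(syy / sxx), sxy)


x_kg = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [1.0, 3.0, 2.0, 5.0, 4.0]
x_g = [1000.0 * v for v in x_kg]          # same data in grams

slope_kg = reduced_major_axis_slope(x_kg, y)
slope_g = reduced_major_axis_slope(x_g, y)
slope_swapped = reduced_major_axis_slope(y, x_kg)   # regress x on y

print(slope_kg, slope_g * 1000.0)         # same line after unit conversion
print(slope_kg * slope_swapped)           # interchange gives the reciprocal
```

Rescaling x by a factor c divides the slope by exactly c, and swapping the roles of x and y yields the reciprocal slope, so both fits describe the same line.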

See also

- Deming regression, a special case with two predictors and independent errors

- Errors-in-variables model

- Linear regression

- Least squares

Notes

- ^ An alternative form is $X^T W X \,\Delta\beta = X^T W \,\Delta y$, where $\Delta\beta$ is the parameter shift from some starting estimate of $\beta$ and $\Delta y$ is the difference between y and the value calculated using the starting value of $\beta$.

References

- ^ W.E. Deming, Statistical Adjustment of Data, Wiley, 1943

- ^ P. Gans, Data Fitting in the Chemical Sciences, Wiley, 1992

- ^ G. H. Golub and C. F. Van Loan, An analysis of the total least squares problem. SIAM J. on Numer. Anal., 17, 1980, pp. 883–893.

- ^ Gene H. Golub and Charles F. Van Loan (1996). Matrix Computations (3rd ed.). The Johns Hopkins University Press. p. 596.

- ^ Å. Björck, Numerical Methods for Least Squares Problems, p. 181. http://books.google.com/books?hl=en&lr=&id=ZecsDBMz5-IC&oi=fnd&pg=PA1&dq=%22Bj%C3%B6rck%22+%22Numerical+methods+for+least+squares+problems%22+&ots=pu0dFsSLI-&sig=c31aDGk0vpMO_I32ppLCKzZKRHM#PPA181,M1

- ^ S. Van Huffel and J. Vandewalle, The Total Least Squares Problem: Computational Aspects and Analysis. SIAM Publications, Philadelphia PA, 1991.

- ^ S. Van Huffel, Documented Fortran 77 programs of the extended classical total least squares algorithm, the partial singular value decomposition algorithm and the partial total least squares algorithm, Internal Report ESAT-KUL 88/1, ESAT Lab., Dept. of Electrical Engineering, Katholieke Universiteit Leuven, 1988.

- ^ S. Van Huffel, The extended classical total least squares algorithm, J. Comput. Appl. Math., 25, pp. 111-119, 1989.

- ^ M. Plešinger, The Total Least Squares Problem and Reduction of Data in AX ≈ B. Doctoral Thesis, TU of Liberec and Institute of Computer Science, AS CR Prague, 2008.

- ^ I. Hnětynková, M. Plešinger, D. M. Sima, Z. Strakoš, and S. Van Huffel, The total least squares problem in AX ≈ B. A new classification with the relationship to the classical works. Submitted to SIMAX, 2010.

- ^ Yaakov (J) Stein. Two Dimensional Euclidean Regression. http://www.dspcsp.com/pubs/euclreg.pdf.

- ^ Paul A. Samuelson (1942). "A Note on Alternative Regressions". Econometrica (The Econometric Society) 10 (1): 80–83. doi:10.2307/1907024. JSTOR 1907024.

- ^ N. R. Draper and H. Smith, Applied Regression Analysis, 3rd edition, pp. 92–96, 1998.

- ^ C. Tofallis (2002), Model fitting for multiple variables by minimising the geometric mean deviation. Downloadable from: http://papers.ssrn.com/sol3/papers.cfm?abstract_id=1077322

Others

- I. Hnětynková, M. Plešinger, D. M. Sima, Z. Strakoš, and S. Van Huffel, The total least squares problem in AX ≈ B. A new classification with the relationship to the classical works. Submitted to SIMAX, 2010.

- M. Plešinger, The Total Least Squares Problem and Reduction of Data in AX ≈ B. Doctoral Thesis, TU of Liberec and Institute of Computer Science, AS CR Prague, 2008.

- I. Markovsky and S. Van Huffel, Overview of total least squares methods. Signal Processing, vol. 87, pp. 2283–2302, 2007.

- C. C. Paige, Z. Strakoš, Core problems in linear algebraic systems. SIAM J. Matrix Anal. Appl. 27, 2006, pp. 861–875.

- S. Van Huffel and P. Lemmerling, Total Least Squares and Errors-in-Variables Modeling: Analysis, Algorithms and Applications. Dordrecht, The Netherlands: Kluwer Academic Publishers, 2002.

- S. Jo and S. W. Kim, Consistent normalized least mean square filtering with noisy data matrix. IEEE Trans. Signal Processing, vol. 53, no. 6, pp. 2112–2123, Jun. 2005.

- R. D. DeGroat and E. M. Dowling, The data least squares problem and channel equalization. IEEE Trans. Signal Processing, vol. 41, no. 1, pp. 407–411, Jan. 1993.

- S. Van Huffel and J. Vandewalle, The Total Least Squares Problem: Computational Aspects and Analysis. SIAM Publications, Philadelphia PA, 1991.

- T. Abatzoglou and J. Mendel, Constrained total least squares, in Proc. IEEE Int. Conf. Acoust., Speech, Signal Process. (ICASSP’87), Apr. 1987, vol. 12, pp. 1485–1488.

- P. de Groen, An introduction to total least squares, in Nieuw Archief voor Wiskunde, Vierde serie, deel 14, 1996, pp. 237–253.

- G. H. Golub and C. F. Van Loan, An analysis of the total least squares problem. SIAM J. on Numer. Anal., 17, 1980, pp. 883–893.

- Perpendicular Regression Of A Line at MathPages

